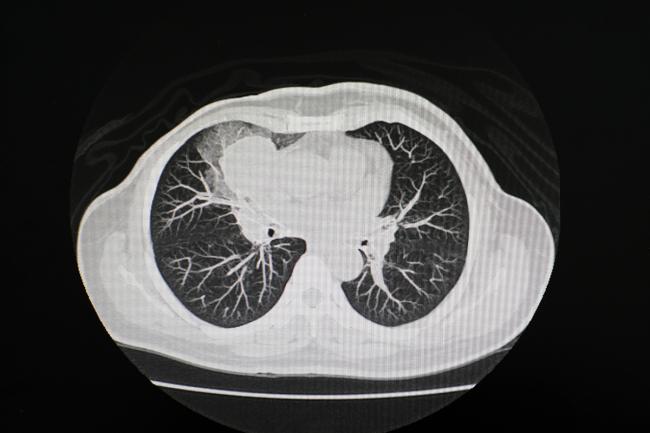

Automated CT scan analysis could fast-track clinical assessments

NIH-funded research suggests AI-powered tool could streamline diagnoses and unveil early markers for chronic disease.

Key points

- Core point: An NIH-funded AI tool, called Merlin, could streamline diagnoses and reveal early markers of chronic disease from abdominal CT scans.

- Key detail: Averaged across 692 diagnostic codes, Merlin correctly predicted which of two scans was more likely to be associated with a given code over 81% of the time.

- Institutional origin: The account comes from NIH News Releases, so the announcement should be kept separate from independent evidence.

The institutional report presents the tool, a model called Merlin, in practical terms and ties it to the agency's broader mission.

The work is relevant because biology becomes more informative when an observed effect begins to look like a mechanism rather than an isolated pattern. The gap between identifying a correlation in biological data and understanding the causal chain that produces it is routinely underestimated, and the history of biomedical research is populated with associations that collapsed when the mechanism was sought and not found. A result that comes with a proposed mechanism, even a partial one, is more useful than a purely descriptive finding because it generates testable predictions that can narrow the hypothesis space.

“Rich datasets like this are necessary to push the limits of what artificial intelligence models can accomplish in medicine,” said Bruce Tromberg, Ph.D. Merlin represents a new class of models, commonly referred to as foundation models, that are trained on large-scale, unlabeled datasets spanning many kinds of information.

In the new work, the researchers tested Merlin across six broad categories of activities, spanning more than 750 individual tasks that entailed diagnostics, prognostics, and [...]. To prepare Merlin for this breadth of tasks, the researchers first trained it on their clinical data trove, which connected more than 15,000 3D abdominal CT scans paired [...].

The researchers then quizzed Merlin on more than 50,000 previously unseen abdominal CT scans, each coming from one of four different hospitals, to learn how closely their model could [...]. Merlin tackled some tasks, such as predicting diagnosis codes, head-on, while other more complicated tasks, such as drafting radiology reports from scratch or identifying and [...].

On average across 692 different diagnostic codes, Merlin correctly predicted which of two scans was more likely to be associated with a particular code over 81% of the time. The study authors also found that, when comparing scans from different subjects, Merlin could identify patients who were at higher risk of developing a particular disease in the next [...].
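The 81% figure is a pairwise ranking score: given one scan that carries a diagnostic code and one that does not, how often does the model score the first one higher? The sketch below shows one way such a score could be computed and averaged across codes. It assumes each scan receives a numeric model score per code plus a binary label; the function names and toy numbers are hypothetical, and the release does not describe the authors' actual evaluation code. Under these assumptions the pairwise score equals the area under the ROC curve.

```python
from itertools import product


def pairwise_concordance(scores, labels):
    """Fraction of (positive, negative) scan pairs in which the positive
    scan gets the higher score. Ties count as half a win."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative scan")
    wins = 0.0
    for p, n in product(pos, neg):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5
    return wins / (len(pos) * len(neg))


def mean_concordance(per_code):
    """Unweighted average of the pairwise score over all diagnostic codes.
    per_code maps a code name to a (scores, labels) tuple."""
    values = [pairwise_concordance(s, y) for s, y in per_code.values()]
    return sum(values) / len(values)


# Toy data (hypothetical): four scans scored for two made-up codes.
toy = {
    "code_A": ([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0]),
    "code_B": ([0.7, 0.2, 0.6, 0.4], [1, 0, 1, 0]),
}

print(pairwise_concordance(*toy["code_A"]))
print(mean_concordance(toy))
```

A score of 0.5 would correspond to guessing at random between the two scans, and 1.0 to ranking every pair correctly, which is the scale against which a value above 0.81 should be read.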

The broader interest lies in whether the reported effect points toward a real mechanism and not merely a reproducible but unexplained association. Biology has learned from decades of biomarker failures that correlation, even robust correlation, is not a substitute for mechanistic understanding. A pathway that can be traced from molecular interaction to cellular response to organismal phenotype provides a far stronger foundation for intervention than a statistical association discovered in a large dataset, however well the statistics are done.

“Our model and the data will provide the community a robust backbone to build upon,” said senior author Akshay Chaudhari, Ph.D. “From here, the sky’s the limit.”

This research was supported by NIBIB through grants R01EB002524 and P41EB027060, by the Medical Imaging and Data Resource Center (MIDRC) under [...].

Because the account originates with NIH News Releases, it functions best as a primary institutional report that is close to the data and operations, not as independent scientific validation. Institutional communications are produced by organizations with legitimate interests in presenting their work in a favorable light, which does not make them unreliable but does make them partial. Details that complicate the narrative, including instrument limitations, unexpected failures and results below projections, tend to be minimized relative to progress messages. Technical documentation and peer-reviewed publications, where they exist, provide the complementary layer that institutional releases cannot substitute.

The next step is to test whether the effect repeats across different methods, datasets and conditions. Reproducibility is the first test, but mechanistic dissection is the second, and a result that passes both has a substantially better chance of translating into something clinically or biotechnologically useful. The path from a research finding to an applied outcome typically takes a decade or more, and most findings do not complete it; the current result sits at the beginning of that process.

Original source: NIH News Releases